Does the term 'distributed systems' give you chills? Do you feel like you’re flying without instruments when observing your production environment? Most of us have felt that way at some point. Distributed systems are hard to tame, and getting full visibility into what’s happening inside them is no easy task.

One of the many layers to monitor is the network. We need to be able to answer questions like:

- Where are my services sending requests?

- Which applications are communicating?

- Is there latency in any link?

- There’s been an unexpected response from our service. From all the events it chained, where did it begin?

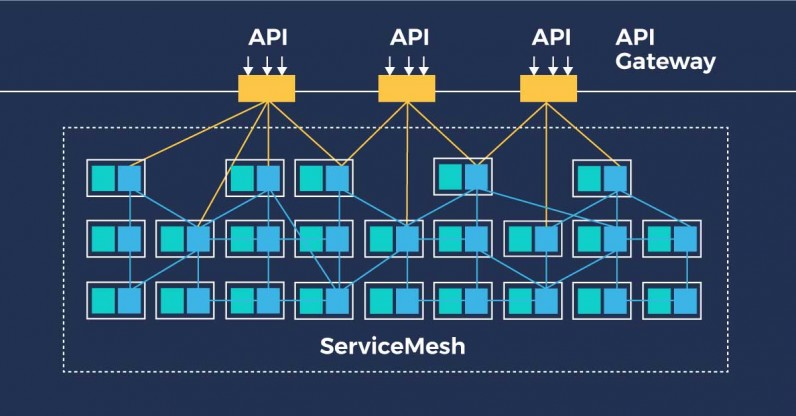

A service mesh is an additional infrastructure layer that gives us more control over the network by extending its features. Let's first define the base concepts, so that we understand where we are and where we want to go.

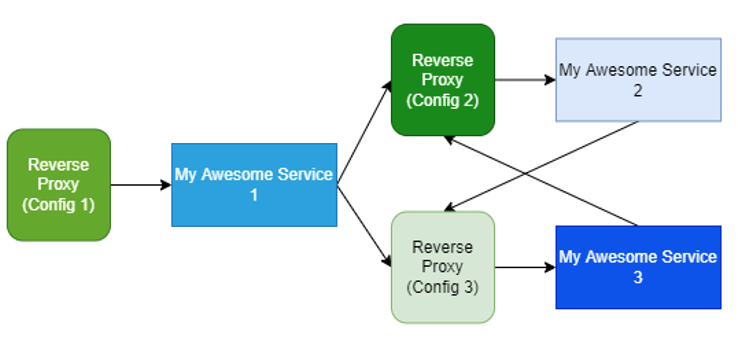

Recall that a reverse proxy (Nginx, HAProxy, you name it) is software that intercepts traffic and provides features such as TLS termination, caching, and metrics.

These are common features worth decoupling from our applications, so that both the environment and the business logic are easier to operate. Now, let's imagine that we have a set of microservices and we want those features for each one of them. We may end up with a whole set of reverse proxies, each one with a different configuration:

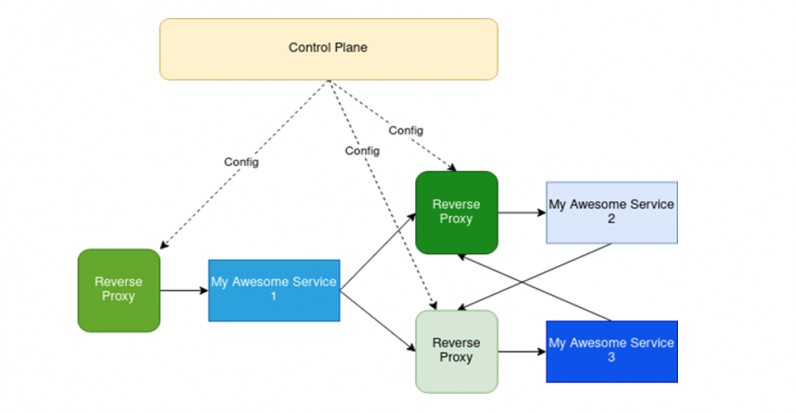

We can all agree that this architecture will soon become difficult to scale and maintain. What if we add some logic that helps us operate all those proxies?

Now it looks easier to handle, right? Well, in fact, that’s what a service mesh looks like.

A service mesh is composed of two main components:

- A control plane, i.e., the logic that computes the configuration of all the proxies and is in charge of keeping them updated.

- A data plane that does all the heavy lifting by intercepting and managing all incoming and outgoing traffic of the services.
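
To make the split between the two components concrete, here is a minimal toy sketch in Python. It is not how Istio or any real mesh is implemented; the `ControlPlane`, `Proxy`, and `ProxyConfig` names and the single `mtls_required` setting are assumptions made up for illustration. The point it tries to show is that the control plane computes one configuration and pushes it to every proxy, while each proxy only enforces whatever configuration it last received.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class ProxyConfig:
    """Settings a proxy enforces (hypothetical and heavily simplified)."""
    version: int = 0
    mtls_required: bool = False


@dataclass
class Proxy:
    """Data plane: sits next to one service and handles its traffic."""
    service: str
    config: ProxyConfig = field(default_factory=ProxyConfig)

    def apply(self, config: ProxyConfig) -> None:
        # Replace the running configuration with the one just pushed.
        self.config = config

    def accepts(self, peer_presented_cert: bool) -> bool:
        # Reject peers without a certificate once mTLS is required.
        return peer_presented_cert or not self.config.mtls_required


class ControlPlane:
    """Control plane: computes the configuration and updates every proxy."""

    def __init__(self) -> None:
        self.proxies = []
        self.version = 0

    def register(self, proxy: Proxy) -> None:
        self.proxies.append(proxy)

    def push(self, mtls_required: bool) -> ProxyConfig:
        # Build a new versioned config and hand it to all registered proxies.
        self.version += 1
        config = ProxyConfig(version=self.version, mtls_required=mtls_required)
        for proxy in self.proxies:
            proxy.apply(config)
        return config


mesh = ControlPlane()
orders, payments = Proxy("orders"), Proxy("payments")
mesh.register(orders)
mesh.register(payments)

before = orders.accepts(peer_presented_cert=False)  # default config: allowed
mesh.push(mtls_required=True)
after = orders.accepts(peer_presented_cert=False)   # new config: rejected
print(before, after, payments.config.version)
```

Note that the services themselves never appear in this sketch: a policy change is a single call on the control plane, and no application code has to change.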

That is the architecture. However, we’re not going to reinvent the wheel because (lucky us) there are already several solutions on the market that provide these features, such as Linkerd, Consul Service Mesh, or Istio. Each one has its pros and cons, so unfortunately for us, there’s no so-called ‘holy grail.’

Given our requirements at The Workshop, we chose Istio, an open-source project originally developed by Google, IBM, and Lyft. It uses Envoy as its proxy, which was originally developed at Lyft.

These are the features we are currently using:

- Enforcing mutual TLS (mTLS) between our microservices, i.e., both parties authenticate each other and the traffic between them is encrypted.

- Limiting outgoing traffic to a set of domains, discarding any other destination.

- TLS termination and HTTP to HTTPS redirection for incoming traffic.

- Custom load balancing for a specific set of applications.
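
As an illustration of the second item, the decision a proxy has to make for outgoing traffic can be sketched in a few lines of Python. This is only a sketch of the idea, not Istio's implementation or configuration format; the `host_allowed` function, the `ALLOWED` list, and the example domains are hypothetical, and the `*.` wildcard convention is an assumption chosen for the example.

```python
def host_allowed(host: str, allowed: list) -> bool:
    """Return True if `host` matches an entry of the egress allowlist.

    Entries are either exact hostnames or wildcards of the form
    "*.example.org", which match any subdomain but not the bare domain.
    """
    host = host.lower().rstrip(".")
    for pattern in allowed:
        pattern = pattern.lower()
        if pattern.startswith("*."):
            suffix = pattern[1:]  # ".example.org"
            if host.endswith(suffix) and len(host) > len(suffix):
                return True
        elif host == pattern:
            return True
    return False


# Hypothetical allowlist: one exact host and one wildcard domain.
ALLOWED = ["api.example.com", "*.example.org"]

for destination in ["api.example.com", "cdn.example.org", "evil.example.net"]:
    verdict = "forward" if host_allowed(destination, ALLOWED) else "discard"
    print(destination, "->", verdict)
```

Anything that does not match an entry is discarded by default, which is the property that makes an allowlist useful: a new destination has to be added explicitly before a service can reach it.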

This technology offers an exciting and wide range of possibilities, and we’re still studying how to improve both the security and performance of our infrastructure by taking full advantage of it.